Six Feet Apart, Please!

Installation, virtual reality, Unity, Oculus Quest

A playful VR simulation, mapped to a physical space, about COVID-19’s social distancing and its influence on social interactions. Made with Aidan Massie and Kyle Chang.

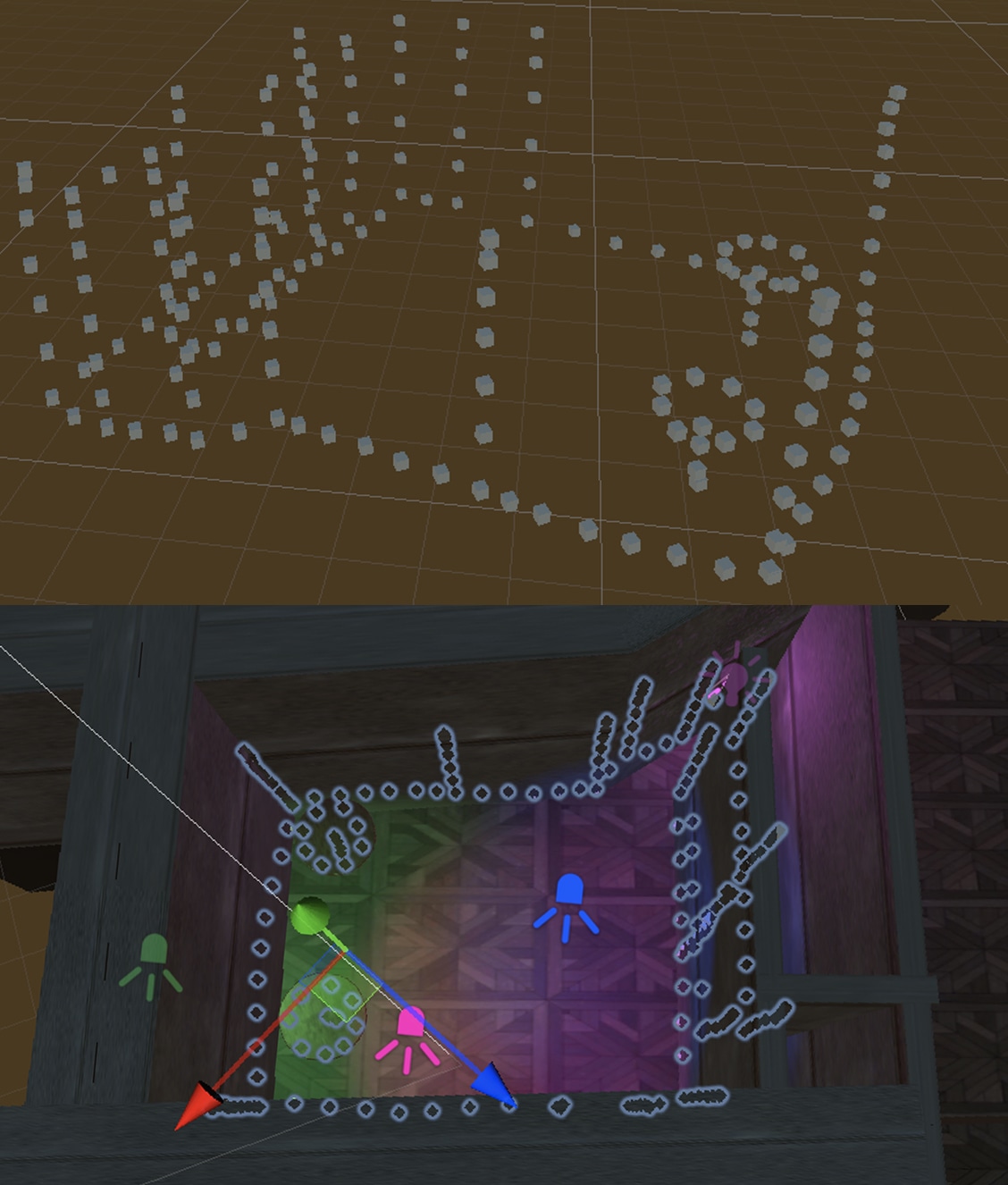

VIRTUAL REALITY VIEW AND PHYSICAL WORLD VIEW

About the Project

Six Feet Apart, Please! is a playful VR simulation about COVID-19’s social distancing and its influence on social interactions. The user enters a virtual club with other people and explores it by walking through the physical world. In the headset, people are pushed away whenever the user breaks the six-foot standard for social distancing. Through the comical sight of 3D-scanned people being pushed away from the VR user, this project reflects on the anxiety of trying to meet new people again after being isolated for so long.
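At its core, the six-foot rule is a distance check on the floor plane. The sketch below is a hypothetical Python illustration, not the project’s Unity code. It assumes positions are in metres (Unity’s usual 1 unit = 1 m) and ignores height. The function names are made up for this example.

```python
import math

# 6 feet converted to metres, assuming Unity's usual 1 unit = 1 m.
SIX_FEET_M = 6 * 0.3048  # about 1.8288 m

def horizontal_distance(a, b):
    """Distance between two (x, y, z) points on the floor (x-z) plane, ignoring height (y)."""
    return math.hypot(a[0] - b[0], a[2] - b[2])

def breaks_distancing(user, person, limit=SIX_FEET_M):
    """True when the person is closer to the user than the distancing limit."""
    return horizontal_distance(user, person) < limit

def push_direction(user, person):
    """Unit vector on the floor plane from the user toward the person: the way they get pushed."""
    dx, dz = person[0] - user[0], person[2] - user[2]
    d = math.hypot(dx, dz)
    if d == 0:
        return (0.0, 0.0, 1.0)  # arbitrary fallback when both stand on the same spot
    return (dx / d, 0.0, dz / d)

user = (0.0, 1.6, 0.0)
guest = (1.0, 1.7, 1.0)  # roughly 1.41 m away on the floor
print(breaks_distancing(user, guest))  # True
```

The check deliberately ignores height, so a taller or shorter scan standing at the same floor spot counts as equally close.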

This project was made with Aidan Massie and Kyle Chang. I worked in Unity to map the VR environment to the physical world through OSC and to code the Oculus Quest locomotion and player interactions. Aidan created the VR environment, and Kyle worked with the 3D scans.

MAPPING PHYSICAL WORLD TO VIRTUAL WORLD

Physical World to Virtual World

An essential part of Six Feet Apart, Please! is that the user’s virtual position matches the user’s position in the physical world. This depends on two things: 1) the virtual world must be mapped precisely to the physical world, and 2) the VR locomotion must be driven by the user walking around the physical space, not by the controllers.

We quickly realised that measuring the physical room and recreating it in Unity would not work, because the virtual room ended up bigger than the physical room. For our second try, I followed a tutorial by Emanuel Tomozei that let us map out the physical room using the Oculus Quest 2 and Unity. It relied on an Open Sound Control (OSC) script that allowed the Oculus to communicate with Unity over Wi-Fi.
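The tutorial’s OSC script is not reproduced here. As a rough illustration of what OSC actually sends over the network, this Python sketch encodes a controller position as an OSC 1.0 message and sends it over UDP, the transport OSC is most commonly used with. The address `/controller/position` and all function names are hypothetical.

```python
import socket
import struct

def osc_string(s: str) -> bytes:
    """Encode an OSC-string: ASCII bytes, a terminating null, then nulls up to a 4-byte boundary."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_message(address: str, *floats: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all float32 (type tag 'f')."""
    type_tags = "," + "f" * len(floats)
    args = b"".join(struct.pack(">f", x) for x in floats)  # big-endian float32
    return osc_string(address) + osc_string(type_tags) + args

def send_position(sock: socket.socket, host: str, port: int, pos) -> None:
    """Send one (x, y, z) controller position as a single OSC message in a UDP datagram."""
    sock.sendto(osc_message("/controller/position", *pos), (host, port))

msg = osc_message("/controller/position", 1.0, 0.5, -2.0)
print(len(msg))  # 44: 24-byte address + 8-byte type tags + 3 floats of 4 bytes
```

A sender would create the socket with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` and call `send_position` whenever a point is recorded. The receiver, Unity in this project, decodes the same layout in reverse.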

To map out the room, someone wore the Oculus headset and held both controllers. They placed a controller at a point in the room, such as on the ground or a wall, and pressed the B button, which created a cube where the controller was. The person kept tracing around the floor, walls, and furniture in the room while pressing B, which eventually produced an outline of the physical room in the virtual environment.
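The tracing process amounts to collecting a cloud of recorded positions and reading the room’s size from it. This hypothetical Python sketch stores each B-press position and reports the traced width, height, and depth. It assumes the walls are aligned with the x and z axes, and the class and method names are illustrative only.

```python
from dataclasses import dataclass, field

@dataclass
class RoomTrace:
    """Positions recorded at each B press; a stand-in for the cubes spawned in the headset."""
    points: list = field(default_factory=list)

    def on_b_pressed(self, controller_pos):
        # In the project a cube appeared here; this sketch only stores the position.
        self.points.append(tuple(float(c) for c in controller_pos))

    def extents(self):
        """Width (x), height (y), and depth (z) of the traced points, assuming axis-aligned walls."""
        if not self.points:
            raise ValueError("no points traced yet")
        xs, ys, zs = zip(*self.points)
        return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))

trace = RoomTrace()
# Four floor corners and one point high on a wall (example values in metres).
for p in [(0, 0, 0), (4, 0, 0), (4, 0, 3), (0, 0, 3), (0, 2.5, 0)]:
    trace.on_b_pressed(p)
print(trace.extents())  # (4.0, 2.5, 3.0)
```

Comparing these extents with a room built from hand measurements is one way to catch the kind of size mismatch the first attempt ran into.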

Using the generated map, we recreated the physical room in Unity with the correct dimensions. Following the outlines, Aidan placed the virtual walls, doorway, ground, and tables in the right positions and at the right scale to match the physical room.
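Placing a wall along a traced outline comes down to a little geometry: the midpoint of two traced floor corners gives the wall’s centre, their distance gives its length, and the direction between them gives its rotation. The Python sketch below is a hypothetical helper for that step, not how Aidan placed the walls. It assumes the wall is a box whose local z axis runs along it, and that a positive yaw turns +z toward +x (Unity’s convention). The default thickness is made up.

```python
import math

def wall_from_corners(a, b, height, thickness=0.1):
    """Return (center, yaw_degrees, scale) for a box wall between floor corners a and b.

    a, b: (x, z) floor positions in metres. The wall's local z axis runs from a to b,
    assuming a positive yaw rotates +z toward +x (Unity's convention).
    """
    dx, dz = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dz)
    center = ((a[0] + b[0]) / 2, height / 2, (a[1] + b[1]) / 2)
    yaw = math.degrees(math.atan2(dx, dz))
    scale = (thickness, height, length)  # x = thickness, y = height, z = length
    return center, yaw, scale

center, yaw, scale = wall_from_corners((0.0, 0.0), (4.0, 0.0), 2.5)
print(center, scale)  # (2.0, 1.25, 0.0) (0.1, 2.5, 4.0)
print(round(yaw, 6))  # 90.0
```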

DIFFERENT LOCOMOTION TESTS

Player Locomotion

The next step was letting the VR user move around the virtual environment by walking in the physical space rather than by using the VR controllers. Since this was my first time using VR, I started with the sample locomotion scene from the Oculus Integration package. Its PlayerController prefab let users navigate through the scene with the controllers. Although this was not the way of moving we wanted, we used the PlayerController for a while to test other elements of the project, such as navigating the recreated virtual room and testing interactions with the 3D scans.

Following the Emanuel Tomozei tutorial mentioned earlier, we figured out how to move through the virtual scene by walking in the real world. This was done with the OVRCameraRig from the Oculus Integration package; however, once we made this switch, we ran into a new problem with collisions.
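With a tracked rig such as the OVRCameraRig, the rig’s origin stays put while the headset’s tracked pose is applied on top of it. As long as the virtual room is built at 1:1 scale, walking one metre in the room therefore moves the camera one metre in the scene. The Python sketch below models that relationship under simplifying assumptions (position only, with the rig rotated only about the vertical axis). It is not the Oculus Integration’s implementation, and the function names are hypothetical.

```python
import math

def rotate_y(v, yaw_deg):
    """Rotate (x, y, z) about the vertical axis; positive yaw turns +z toward +x (Unity-style)."""
    t = math.radians(yaw_deg)
    x, y, z = v
    return (x * math.cos(t) + z * math.sin(t), y, -x * math.sin(t) + z * math.cos(t))

def head_world_position(rig_pos, rig_yaw_deg, tracked_local):
    """World position of the head: rig origin plus the headset's tracked offset, rotated by the rig."""
    off = rotate_y(tracked_local, rig_yaw_deg)
    return tuple(p + o for p, o in zip(rig_pos, off))

door = (0.0, 0.0, 0.0)  # rig origin where the experience starts
# The user physically walks 2 m forward: only the tracked offset changes, not the rig.
print(head_world_position(door, 0.0, (0.0, 1.6, 2.0)))  # (0.0, 1.6, 2.0)
```

Controller locomotion would instead change `rig_pos` directly, so the virtual and physical positions would drift apart. That drift is exactly what this project needed to avoid.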

COLLISION TESTS

Simulation Interaction

The interaction in Six Feet Apart, Please! has the VR user walk up to 3D scans of people, which then move away from the user in a comical way. Before we started testing with the 3D scans, we used cylinders to make sure collisions were working. At this point, we were still using the PlayerController prefab from the Oculus Integration package, which relied on the VR controllers for navigation.

Once Kyle had turned the 3D scans of people into rigged models, I replaced the cylinders with the scans, and collisions worked again. I wrote a script, attached to the player, that detected when the player’s collider was hit by a 3D scan. This triggered Kyle’s scripts on the 3D scan, which pushed it away, put it into ragdoll physics, and eventually got it back up again.
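The hand-off between the player’s collision script and the scripts on the scans can be modelled as a small state machine: pushed, ragdoll, getting up, standing again. This Python sketch is a hypothetical model of that flow. The state names, durations, and method names are assumptions for illustration and do not come from Kyle’s or the author’s Unity scripts.

```python
from enum import Enum, auto

class State(Enum):
    STANDING = auto()
    PUSHED = auto()
    RAGDOLL = auto()
    GETTING_UP = auto()

# Hypothetical durations in seconds for each timed state, and the state that follows it.
DURATIONS = {State.PUSHED: 0.3, State.RAGDOLL: 2.0, State.GETTING_UP: 1.0}
NEXT = {State.PUSHED: State.RAGDOLL, State.RAGDOLL: State.GETTING_UP, State.GETTING_UP: State.STANDING}

class ScannedPerson:
    def __init__(self):
        self.state = State.STANDING
        self.timer = 0.0

    def on_player_contact(self):
        """Called by the player's collision script; only a standing person can be pushed."""
        if self.state is State.STANDING:
            self.state, self.timer = State.PUSHED, 0.0

    def update(self, dt):
        """Advance the timer and move to the next state once the current one runs out."""
        if self.state is State.STANDING:
            return
        self.timer += dt
        if self.timer >= DURATIONS[self.state]:
            self.state, self.timer = NEXT[self.state], 0.0

person = ScannedPerson()
person.on_player_contact()
print(person.state)  # State.PUSHED
```

Ignoring contacts outside the standing state keeps a scan that is already on the floor from being pushed again mid-fall.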

UNEXPECTED INTERACTION FROM TESTING AND COLLIDER TRACKING

Player Collision

After the locomotion problem was solved and we switched from the PlayerController prefab to the OVRCameraRig, collisions between the VR player and the 3D-scanned people stopped working. I spent a lot of time testing in Unity, adding scripts, rearranging the player’s hierarchy, and looking up answers online. This led to some funny VR interactions, including the spinning 3D scan in the video above.

Eventually, I realised the problem was that the collider on the OVRCameraRig did not move with the player during the VR experience; instead, it stayed at the starting spot by the door. I found this out by attaching a red capsule, visible during the VR experience, to the OVRCameraRig. However, knowing this still did not solve the problem.

In the end, we realised we should switch from the OVRCameraRig to the OVRPlayerController. The OVRPlayerController also contains the OVRCameraRig, but its additional components let the colliders inside it follow the player as they moved. We ended up placing a capsule collider on the CenterEyeAnchor to trigger the collision interactions.
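A simple overlap test shows why the collider’s placement mattered. In this Python sketch, which is a simplification and not Unity’s physics, the player and a scan are upright capsules on the same floor. They overlap when their horizontal distance is less than the sum of their radii. A capsule left at the starting spot never reaches the scans, while one built from the tracked head position does. The radii and positions are made-up example values, and the names are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class VerticalCapsule:
    x: float
    z: float
    radius: float

def overlaps(a: VerticalCapsule, b: VerticalCapsule) -> bool:
    """Upright capsules on the same floor overlap when their axes are closer than the sum of radii."""
    return math.hypot(a.x - b.x, a.z - b.z) < a.radius + b.radius

def player_capsule(head_pos, radius=0.25):
    """Player capsule centred under the tracked head (a CenterEyeAnchor-style position)."""
    return VerticalCapsule(head_pos[0], head_pos[2], radius)

scan = VerticalCapsule(3.2, 2.1, 0.3)
stuck = player_capsule((0.0, 1.6, 0.0))      # collider left at the starting spot by the door
following = player_capsule((3.0, 1.6, 2.0))  # collider that follows the head
print(overlaps(stuck, scan), overlaps(following, scan))  # False True
```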